AI and Human Emotions: Can Machines Understand Us?

Introduction

Let’s face it—machines are getting smarter every day. They can drive cars, write poetry, and even crack jokes (well, kind of). But here’s the real kicker: can they understand how we feel?

The idea of artificial intelligence (AI) comprehending human emotions sounds like something out of a science fiction film. Yet with advances in machine learning and affective computing, it’s becoming more of a reality. The burning question is: can machines truly understand us on an emotional level?

Understanding Human Emotions

What Are Emotions?

Emotions are complex psychological states made up of three main components: a subjective experience, a physiological reaction, and a behavioral or expressive response. In simple terms, they are how we feel about situations and how we react to them.

The Science Behind Human Emotions

Neuroscience tells us that emotions are deeply tied to brain regions like the amygdala, hippocampus, and prefrontal cortex. These parts help us evaluate situations, assign emotional meaning, and react accordingly. Emotions aren’t just feelings—they’re data.

Role of Emotions in Daily Life

Emotions influence everything: our decisions, relationships, work, and even our health. Imagine living a day without feeling anything—no joy, no frustration, no excitement. Emotions are essential to being human.

Emotional Intelligence (EQ) vs Artificial Intelligence (AI)

What is Emotional Intelligence?

Emotional intelligence (EQ) is the ability to recognize, understand, and manage emotions, both your own and those of others. It’s what makes someone a good leader, friend, or partner.

How AI Differs from EQ

AI doesn’t have feelings. Instead, it analyzes patterns in data and responds according to programmed rules or models trained on examples. Unlike a person with high EQ, AI doesn’t “feel” emotions; it simulates an understanding of them through logic and machine learning.

Bridging the Gap Between AI and EQ

The field of affective computing attempts to narrow this gap. By teaching machines to recognize and respond to emotional cues, developers are bringing AI closer to mimicking emotional intelligence.

The Evolution of Emotion-Aware AI

Early Attempts at Emotion Recognition

Remember Clippy from Microsoft Word? Primitive attempts at emotion detection started with rule-based systems that tried (and often failed) to detect user frustration or confusion.
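To make the idea of a rule-based system concrete, here is a minimal Python sketch. The interaction signals (undo count, rapid clicks, help-menu opens, idle time) and every threshold are hypothetical choices for illustration, not how Clippy or any real product actually worked.

```python
from dataclasses import dataclass


@dataclass
class InteractionLog:
    """Hypothetical counts of user actions over the last minute."""
    undo_count: int
    rapid_clicks: int      # clicks less than ~300 ms apart
    help_menu_opens: int
    seconds_idle: float


def guess_user_state(log: InteractionLog) -> str:
    """Return a coarse label from hand-written rules; nothing is learned from data."""
    # Rule 1: repeated undos plus frantic clicking is read as frustration.
    if log.undo_count >= 3 and log.rapid_clicks >= 5:
        return "frustrated"
    # Rule 2: repeatedly opening help or sitting idle is read as confusion.
    if log.help_menu_opens >= 2 or log.seconds_idle > 60:
        return "confused"
    return "neutral"


print(guess_user_state(InteractionLog(undo_count=4, rapid_clicks=7,
                                      help_menu_opens=0, seconds_idle=5)))
# prints: frustrated
```

Notice the weakness: a calm user who undoes edits while experimenting trips the first rule just as easily, which is exactly why hand-written rules so often guessed wrong.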

Modern Technologies Used in Affective Computing

Today’s AI uses deep learning, natural language processing (NLP), and multimodal data analysis to recognize emotions in real time. Compared to Clippy, it’s much more sophisticated and less… obnoxious.
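One common way to combine several data sources is late fusion: each modality (face, voice, text) gets its own model, and their per-emotion probabilities are merged afterwards. The sketch below assumes those upstream models already exist and that each returns probabilities summing to 1; the modality weights are made-up values, not figures from any real system.

```python
EMOTIONS = ("happy", "sad", "angry", "neutral")


def late_fusion(modality_scores: dict[str, dict[str, float]],
                weights: dict[str, float]) -> dict[str, float]:
    """Merge per-modality emotion probabilities with a weighted average."""
    fused = {emotion: 0.0 for emotion in EMOTIONS}
    total_weight = 0.0
    for modality, scores in modality_scores.items():
        weight = weights.get(modality, 0.0)  # modalities without a weight are ignored
        total_weight += weight
        for emotion in EMOTIONS:
            fused[emotion] += weight * scores.get(emotion, 0.0)
    if total_weight == 0:
        raise ValueError("no modality has a positive weight")
    # Dividing by the weights actually used keeps the result summing to 1,
    # even when a modality (e.g. the camera) is missing.
    return {emotion: value / total_weight for emotion, value in fused.items()}


# Hypothetical outputs from three separate per-modality models.
scores = {
    "face":  {"happy": 0.7, "sad": 0.1, "angry": 0.0, "neutral": 0.2},
    "voice": {"happy": 0.4, "sad": 0.2, "angry": 0.1, "neutral": 0.3},
    "text":  {"happy": 0.6, "sad": 0.1, "angry": 0.1, "neutral": 0.2},
}
weights = {"face": 0.5, "voice": 0.3, "text": 0.2}  # made-up weights

fused = late_fusion(scores, weights)
print(max(fused, key=fused.get))  # prints: happy
```

The alternative, early fusion, concatenates raw features from all modalities and feeds them to a single model. Late fusion is simpler to build and degrades gracefully when one signal is unavailable.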

Emotion AI in Consumer Devices

Smartphones, fitness wearables, and even smart speakers running assistants like Alexa are being equipped with emotion recognition capabilities. Your phone might soon detect your mood before you even realize it.

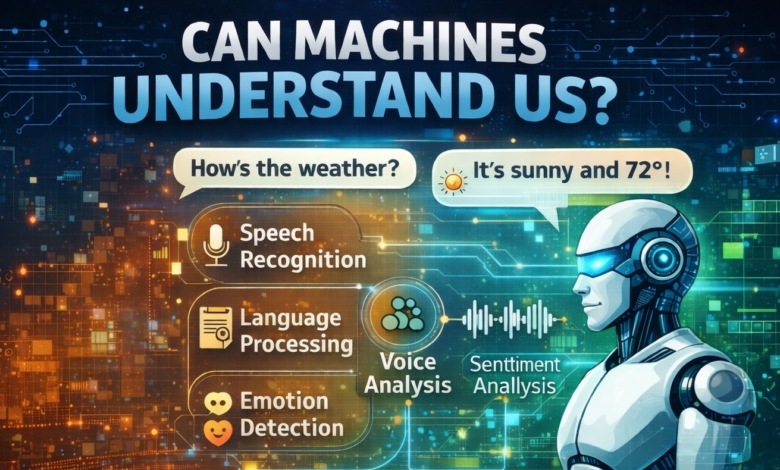

How Machines Detect Emotions

Facial Recognition

By examining facial expressions, including fleeting microexpressions, AI can estimate whether a person is happy, sad, angry, or surprised. Tools like FaceReader and Affectiva are already using this in security, marketing, and research.
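Many facial-analysis systems describe expressions in terms of action units (AUs) from the Facial Action Coding System (FACS), such as AU6 (cheek raiser) and AU12 (lip corner puller). The sketch below assumes a hypothetical upstream detector that outputs AU intensities between 0 and 1, then matches them against a few simplified, commonly cited AU prototypes. It illustrates the idea only; it is not the method FaceReader or Affectiva use, since those rely on trained models.

```python
# Simplified FACS action-unit prototypes often cited for basic emotions.
# Real expressions vary; treat these as illustrative, not a complete rule set.
PROTOTYPES = {
    "happiness": {6, 12},       # cheek raiser + lip corner puller
    "sadness": {1, 4, 15},      # inner brow raiser + brow lowerer + lip corner depressor
    "surprise": {1, 2, 5, 26},  # inner/outer brow raisers + upper lid raiser + jaw drop
    "anger": {4, 5, 7, 23},     # brow lowerer + upper lid raiser + lid tightener + lip tightener
}


def classify_expression(au_intensities: dict[int, float],
                        active_threshold: float = 0.5,
                        match_threshold: float = 0.75) -> str:
    """Pick the prototype whose action units are most completely present."""
    active = {au for au, value in au_intensities.items() if value >= active_threshold}
    best_label, best_score = "neutral", 0.0
    for label, required in PROTOTYPES.items():
        score = len(active & required) / len(required)  # fraction of the prototype present
        if score > best_score:
            best_label, best_score = label, score
    # Only commit to an emotion when most of its prototype is visible.
    return best_label if best_score >= match_threshold else "neutral"


# Hypothetical detector output: strong AU6 and AU12, weak AU25 (lips part).
print(classify_expression({6: 0.8, 12: 0.9, 25: 0.4}))  # prints: happiness
```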

Voice Analysis

Ever noticed how your tone changes with your mood? AI picks up on pitch, speaking rate, pauses, and inflection to infer emotions from speech. These are the kinds of cues a smart assistant could use to tell when you’re stressed.
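Two of those cues are easy to sketch with nothing but the Python standard library: pitch, estimated by autocorrelation (finding the lag at which the signal best matches a shifted copy of itself), and pauses, estimated as the share of low-energy frames. The frame size, silence threshold, and 75 to 400 Hz pitch range are assumptions, and a synthetic 200 Hz tone stands in for real speech.

```python
import math


def rms_energy(frame: list[float]) -> float:
    """Root-mean-square amplitude: a rough proxy for loudness."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def estimate_pitch(samples: list[float], sample_rate: int,
                   fmin: float = 75.0, fmax: float = 400.0) -> float:
    """Estimate the fundamental frequency (Hz) of a voiced segment by autocorrelation."""
    min_lag = int(sample_rate / fmax)                          # shortest period considered
    max_lag = min(int(sample_rate / fmin), len(samples) - 1)   # longest period considered
    best_lag, best_corr = min_lag, -math.inf
    for lag in range(min_lag, max_lag + 1):
        # How well the signal lines up with a copy of itself shifted by `lag` samples.
        corr = sum(samples[i] * samples[i + lag] for i in range(len(samples) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag


def pause_ratio(samples: list[float], frame_size: int,
                silence_threshold: float = 0.01) -> float:
    """Share of fixed-size frames quiet enough to count as a pause."""
    frames = [samples[i:i + frame_size]
              for i in range(0, len(samples) - frame_size + 1, frame_size)]
    if not frames:
        return 0.0
    silent = sum(1 for frame in frames if rms_energy(frame) < silence_threshold)
    return silent / len(frames)


rate = 8000
tone = [0.5 * math.sin(2 * math.pi * 200 * n / rate) for n in range(400)]  # 50 ms at 200 Hz
print(round(estimate_pitch(tone, rate)))               # prints: 200
print(pause_ratio(tone + [0.0] * 400, frame_size=80))  # prints: 0.5
```

Real systems track features like these over time and feed them to a trained classifier; on their own, a raised pitch and fewer pauses could signal stress in one speaker and excitement in another.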

Text and Sentiment Analysis

AI scans emails, tweets, reviews, and more to pick up on sentiment. Whether you’re raving or ranting, machines can usually tell the difference through keyword and context analysis.
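The simplest form of this is lexicon-based scoring: each word carries a sentiment weight, and a small amount of context (here, a negation check over the two preceding words) can flip it. The word list and weights below are hypothetical; production systems use large curated lexicons or trained language models.

```python
import re

# Tiny hypothetical lexicon: word -> sentiment weight.
LEXICON = {"love": 2, "great": 2, "good": 1, "fine": 0.5,
           "bad": -1, "slow": -1, "terrible": -2, "hate": -2}
NEGATIONS = {"not", "no", "never", "don't", "isn't", "wasn't"}


def sentiment_score(text: str) -> float:
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0.0
    for i, token in enumerate(tokens):
        value = LEXICON.get(token, 0)
        # Minimal context: a negation in the two preceding words flips polarity.
        if any(prev in NEGATIONS for prev in tokens[max(0, i - 2):i]):
            value = -value
        score += value
    return score


def sentiment_label(text: str) -> str:
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment_label("I love this phone, the camera is great!"))      # prints: positive
print(sentiment_label("The update is not good and the app is slow."))  # prints: negative
```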

Physiological Signal Detection

Some emotion-detecting wearables monitor heart rate, skin conductance, and even brainwaves. These subtle cues provide additional layers of emotional data for machines to interpret.
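As a rough illustration, two widely used physiological features are RMSSD, a heart rate variability measure computed from the intervals between heartbeats, and mean skin conductance. The sketch compares both with a personal resting baseline; the ratios used as thresholds are invented for illustration. Such signals indicate arousal (how activated someone is) rather than which emotion they feel: excitement and anxiety can look alike.

```python
import math
from statistics import mean


def rmssd(rr_intervals_ms: list[float]) -> float:
    """Root mean square of successive differences between heartbeat intervals (ms)."""
    diffs = [later - earlier for earlier, later in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(mean([d * d for d in diffs]))


def high_arousal(rr_intervals_ms: list[float], skin_conductance_microsiemens: list[float],
                 baseline_rmssd: float, baseline_conductance: float,
                 hrv_ratio: float = 0.7, conductance_ratio: float = 1.2) -> bool:
    """Hypothetical rule: HRV well below baseline AND skin conductance well above it."""
    low_hrv = rmssd(rr_intervals_ms) < hrv_ratio * baseline_rmssd
    high_conductance = mean(skin_conductance_microsiemens) > conductance_ratio * baseline_conductance
    return low_hrv and high_conductance


# Toy readings for one short window, compared with a resting baseline.
print(high_arousal([700, 702, 699, 701], [6.0, 6.5, 7.0],
                   baseline_rmssd=40.0, baseline_conductance=4.0))  # prints: True
```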

Real-World Applications of Emotion AI

AI in Mental Health

AI-powered chatbots like Woebot or Wysa provide mental health support by recognizing emotional distress and offering coping mechanisms. They’re not therapists—but they’re helpful companions.

AI in Customer Service

AI agents analyze customer tone and sentiment during chats or calls. This helps companies better respond to complaints and improve customer satisfaction.
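A typical pattern here is escalation: track the sentiment of recent messages and hand the conversation to a human agent when the trend turns negative. This sketch assumes some upstream model scores each message from -1 (very negative) to 1 (very positive); the window size and threshold are hypothetical.

```python
from collections import deque


class EscalationMonitor:
    """Hypothetical rule: escalate when the last few messages average below a threshold."""

    def __init__(self, window: int = 3, threshold: float = -0.4):
        self.recent = deque(maxlen=window)  # keeps only the newest `window` scores
        self.threshold = threshold

    def update(self, message_sentiment: float) -> bool:
        """Record one message's sentiment (-1 to 1); return True if a human should take over."""
        self.recent.append(message_sentiment)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough history yet
        return sum(self.recent) / len(self.recent) < self.threshold


monitor = EscalationMonitor()
for score in [0.2, -0.3, -0.6, -0.7]:
    print(monitor.update(score))  # prints False, False, False, True
```

Averaging over a window, instead of reacting to a single message, keeps one terse reply from triggering a handoff.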

AI in Education

Emotion-aware AI tools in classrooms can detect student engagement levels, boredom, or confusion. Teachers can then tailor instruction more effectively.

AI in Marketing and Advertising

Marketers use emotion AI to gauge consumer reactions to ads, websites, and products. If a commercial makes people smile, AI can track it and optimize future campaigns.
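Here is a minimal sketch of how such tracking might be aggregated, assuming a hypothetical face-analysis model has already produced a smile probability for each viewer at each second of the ad. Averaging across viewers yields an engagement curve, and its peak shows which moment landed best. The numbers are toy values, not real test results.

```python
from statistics import mean


def smile_curve(viewer_traces: list[list[float]]) -> list[float]:
    """Average smile probability across viewers, second by second.

    zip() stops at the shortest trace, so traces are assumed to be aligned
    to the same start time and equal in length.
    """
    return [mean(second) for second in zip(*viewer_traces)]


def peak_second(curve: list[float]) -> int:
    """Index (in seconds) of the moment with the highest average smile probability."""
    return max(range(len(curve)), key=lambda t: curve[t])


# Toy data: three viewers watching a four-second ad.
traces = [
    [0.1, 0.2, 0.8, 0.4],
    [0.0, 0.3, 0.9, 0.2],
    [0.2, 0.1, 0.7, 0.3],
]
curve = smile_curve(traces)
print(peak_second(curve))  # prints: 2
```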

Limitations and Challenges

Contextual Understanding

A person laughing might be genuinely happy, or just being sarcastic. Because AI often struggles to grasp context, it can easily misread signals like these.
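Sarcasm shows why. This deliberately naive, word-by-word scorer (with a hypothetical three-word lexicon) rates an obvious complaint as positive, because it sees “great” and “love” but not the tone around them.

```python
# Hypothetical three-word lexicon, deliberately naive.
LEXICON = {"great": 2, "love": 2, "delay": -1}


def naive_score(text: str) -> int:
    """Sum word weights with no notion of tone, irony, or context."""
    return sum(LEXICON.get(word.strip(".,!?").lower(), 0) for word in text.split())


print(naive_score("Oh great, another delay. I just love waiting."))  # prints: 3 (read as positive)
```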

Cultural and Individual Differences

Facial expressions and emotional reactions vary across cultures and individuals. In Japan, for example, a smile can sometimes mask embarrassment or discomfort rather than express joy. AI still struggles with these nuances.

Ethical Concerns

Emotion AI raises serious questions. Is it ethical to track someone’s feelings without their consent? Could it be used to manipulate people, or for emotional surveillance?

Accuracy and Bias

Emotion-detecting algorithms can be biased—especially if trained on non-diverse datasets. This may result in biased decisions and erroneous emotional readings.
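One basic safeguard is to audit accuracy separately for each demographic group instead of reporting a single overall number. The sketch below uses a handful of invented records purely to show the calculation; the group names and labels are hypothetical.

```python
from collections import defaultdict


def accuracy_by_group(records: list[tuple[str, str, str]]) -> dict[str, float]:
    """records: (group, true_label, predicted_label) triples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        correct[group] += int(truth == predicted)
    return {group: correct[group] / total[group] for group in total}


# Invented toy records, only to demonstrate the calculation.
records = [
    ("group_a", "happy", "happy"), ("group_a", "sad", "sad"),
    ("group_a", "angry", "angry"), ("group_a", "neutral", "neutral"),
    ("group_b", "happy", "happy"), ("group_b", "sad", "angry"),
    ("group_b", "angry", "angry"), ("group_b", "neutral", "angry"),
]
print(accuracy_by_group(records))  # prints: {'group_a': 1.0, 'group_b': 0.5}
```

In this toy case the model is 75% accurate overall, yet it gets one group wrong half the time, a gap that a single headline number would hide.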

Can AI Truly Understand Emotions?

Surface-Level Recognition vs Deep Understanding

Right now, AI can recognize emotional signals, but it doesn’t feel them. It can mimic empathy, but not experience it.

Empathy: Can It Be Programmed?

Empathy involves shared experience, not just pattern recognition. AI can simulate empathy in responses, but it doesn’t “get” what you’re feeling the way a human does.

Consciousness vs Simulation

True emotional understanding may require consciousness—something AI doesn’t have. At best, it’s an expert imitator, not an emotional being.

The Future of Emotion AI

Research and Development

Researchers are exploring more sophisticated emotion models and combining AI with neuroscience. There’s potential—but we’re still far from emotional machines.

Collaboration Between Psychologists and Technologists

For emotion AI to succeed, collaboration is key. Psychologists bring insight into emotional behavior, while technologists bring tools to interpret it.

AI Companions and Emotional Support Bots

The future may see AI companions that offer emotional support—especially for elderly or socially isolated individuals. Whether they can replace human connection is still up for debate.

Conclusion

So, can machines understand us emotionally? Kind of, but not really. Although AI can recognize emotions, respond to them, and even offer comfort, it doesn’t actually feel anything. Emotion AI has enormous potential in industries like marketing, healthcare, and education, but it still has a long way to go.

We’re teaching machines to read emotions, but emotional understanding—the kind rooted in human experience—remains uniquely ours.

FAQs

1. What is emotional AI?

Emotional AI, or affective computing, refers to systems designed to recognize and respond to human emotions using data like facial expressions, voice tone, or physiological signals.

2. Can machines feel emotions?

No, machines can’t feel emotions. They can mimic comprehension and react accordingly, but they lack human-like emotional experiences.

3. How does AI detect human feelings?

AI uses tools like facial recognition, voice analysis, and sentiment detection in text to infer emotional states based on patterns and data.

4. Is emotion AI used in therapy?

Yes, AI chatbots and mental health apps use emotional recognition to provide supportive conversations, though they are not a replacement for licensed therapists.

5. What are the dangers of emotion-aware AI?

Privacy invasion, emotional manipulation, algorithmic bias, and misuse in surveillance are key concerns surrounding emotion AI.